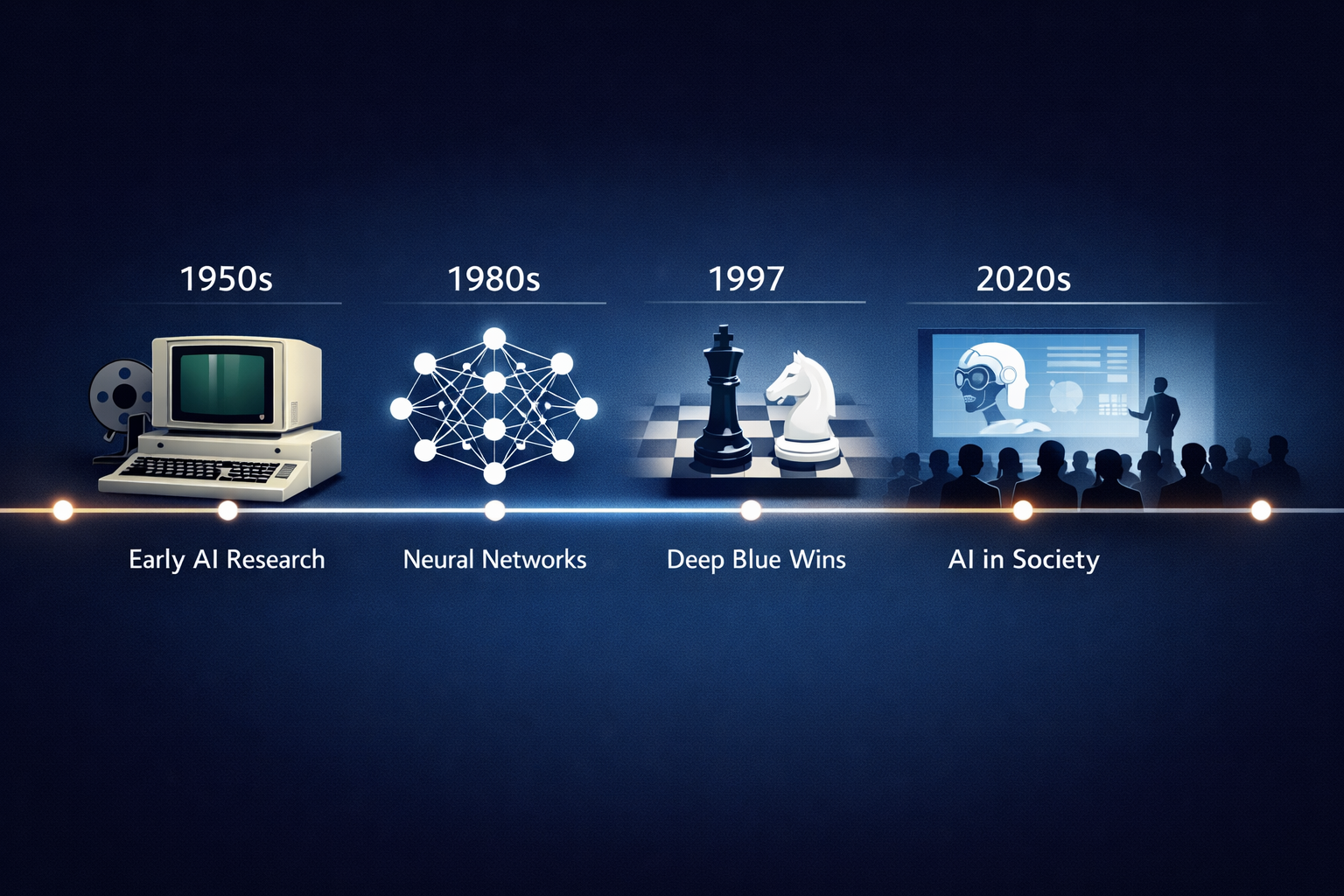

Artificial Intelligence (AI) has evolved from a speculative concept to a transformative force reshaping industries, society, and daily life. Its history is marked by breakthroughs, setbacks, and paradigm shifts that have collectively paved the way for the sophisticated AI systems we see today. Understanding these pivotal moments not only sheds light on how AI developed but also provides context for its current and future potential.

The Dartmouth Conference: The Birth of AI as a Field

In the summer of 1956, a group of scientists gathered at Dartmouth College for a workshop that would lay the foundation of AI as an academic discipline. The workshop was organized by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, whose 1955 funding proposal conjectured that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.”

This ambitious hypothesis sparked the formal study of AI, catalyzing research efforts across universities and corporations. The proposal introduced the term “artificial intelligence,” and the workshop outlined a research agenda focused on problem-solving, language understanding, and symbolic reasoning.

Impact and Relevance

The Dartmouth Conference’s emphasis on symbolic AI—using logic and rules to mimic human reasoning—influenced decades of research. Although early AI systems struggled with real-world complexity, the conference established AI as a serious, multidisciplinary endeavor that combined computer science, psychology, linguistics, and mathematics.

Early AI Programs: From Logic Theorist to ELIZA

Following Dartmouth, pioneering programs showcased the potential of AI:

- Logic Theorist (1956): Developed by Allen Newell, Herbert A. Simon, and Cliff Shaw, this program proved theorems from Whitehead and Russell’s Principia Mathematica, demonstrating that machines could perform tasks requiring logical reasoning.

- ELIZA (1966): Created by Joseph Weizenbaum, ELIZA simulated conversation by mimicking a Rogerian psychotherapist. Although it relied on simple keyword matching and scripted response templates, it highlighted both the possibilities and the limitations of natural language processing.

These early AI programs inspired optimism but also revealed challenges, such as understanding context and the brittleness of rule-based systems.
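
To make the brittleness of rule-based systems concrete, here is a minimal sketch of ELIZA-style keyword matching in Python. The rules, the `reflect` and `respond` helpers, and the fallback reply are hypothetical and chosen only for illustration; they are not Weizenbaum’s original script.

```python
import re

# Hypothetical rules for illustration only; not Weizenbaum's original script.
# Each rule pairs a keyword pattern with a response template.
RULES = [
    (re.compile(r"\bI need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bI am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

# Swap first- and second-person words so the echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "my": "your", "me": "you", "am": "are"}


def reflect(fragment: str) -> str:
    """Lowercase the fragment and flip pronouns word by word."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(utterance: str) -> str:
    """Return the template of the first matching rule, or the fallback reply."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK
```

With these rules, `respond("I need a break from my job.")` produces a plausible reply, while `respond("I'm tired")` falls through to the fallback because no rule anticipates the contraction. The program matches surface patterns and has no model of what the user means, which is the kind of brittleness described above.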

The AI Winter: Lessons from Overpromising

Despite early enthusiasm, AI ran into significant obstacles in the 1970s and again in the late 1980s. Expectations repeatedly outran technical reality, leading to periods known as “AI winters,” characterized by reduced funding and widespread skepticism.

Contributing factors included:

- Limitations of symbolic reasoning in handling uncertainty and ambiguity.

- Computational constraints of hardware available at the time.

- Failures to deliver practical applications at scale.

However, these setbacks also motivated researchers to explore alternative approaches, including probabilistic models and machine learning techniques.

The Rise of Machine Learning and Neural Networks

The 1980s and 1990s saw a resurgence in AI through the development of machine learning, where systems learn patterns from data rather than rely solely on programmed rules.

Key milestones include:

- Backpropagation Algorithm (1986): Popularized by David Rumelhart, Geoffrey Hinton, and Ronald Williams, backpropagation enabled multi-layer neural networks to adjust weights efficiently, sparking renewed interest in connectionist models.

- Support Vector Machines and Decision Trees: These algorithms provided powerful tools for classification and regression tasks, further advancing AI’s practical capabilities.

Machine learning shifted AI towards statistical methods, accommodating noise and complexity in real-world data.
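
As a rough illustration of how backpropagation adjusts weights, the sketch below computes gradients for a tiny network with two sigmoid hidden units and one sigmoid output, using a squared-error loss on a single example. The network size, the example weights, and the function names are assumptions made for this sketch; it is not the formulation from the 1986 paper.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, W1, b1, w2, b2):
    """Return the hidden activations and the scalar output for one input."""
    h = [sigmoid(sum(w * xj for w, xj in zip(row, x)) + b) for row, b in zip(W1, b1)]
    y = sigmoid(sum(w * hi for w, hi in zip(w2, h)) + b2)
    return h, y


def loss(y, target):
    return 0.5 * (y - target) ** 2


def backward(x, target, W1, b1, w2, b2):
    """Apply the chain rule from the output layer back to the first layer."""
    h, y = forward(x, W1, b1, w2, b2)
    # Output layer: dL/dz = (y - target) * sigmoid'(z), where sigmoid'(z) = y * (1 - y).
    delta_out = (y - target) * y * (1.0 - y)
    grad_w2 = [delta_out * hi for hi in h]
    grad_b2 = delta_out
    # Hidden layer: send delta_out back through w2 and each hidden sigmoid.
    delta_hidden = [delta_out * w * hi * (1.0 - hi) for w, hi in zip(w2, h)]
    grad_W1 = [[d * xj for xj in x] for d in delta_hidden]
    grad_b1 = list(delta_hidden)
    return grad_W1, grad_b1, grad_w2, grad_b2


def sgd_step(x, target, W1, b1, w2, b2, lr=0.1):
    """Move every parameter a small step against its gradient."""
    gW1, gb1, gw2, gb2 = backward(x, target, W1, b1, w2, b2)
    new_W1 = [[w - lr * g for w, g in zip(row, grow)] for row, grow in zip(W1, gW1)]
    new_b1 = [b - lr * g for b, g in zip(b1, gb1)]
    new_w2 = [w - lr * g for w, g in zip(w2, gw2)]
    new_b2 = b2 - lr * gb2
    return new_W1, new_b1, new_w2, new_b2


# Arbitrary example values, chosen only to exercise the functions.
x, target = [1.0, 0.0], 1.0
W1, b1 = [[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1]
w2, b2 = [0.7, -0.4], 0.05
```

Calling `sgd_step` repeatedly on the example values nudges the output toward the target. The key point is that the error signal at the output (`delta_out`) is reused to compute the hidden-layer gradients, so each layer's update costs about as much as a forward pass.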

Deep Learning and Modern AI Breakthroughs

In the 2010s, deep learning—a subset of machine learning using large, multi-layered neural networks—revolutionized AI by achieving unprecedented accuracy in tasks such as image recognition, speech processing, and natural language understanding.

Significant developments include:

- ImageNet Competition (2012): A convolutional neural network designed by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton, later known as AlexNet, dramatically outperformed traditional computer vision methods, accelerating the adoption of deep learning.

- Generative Models: Generative Adversarial Networks (GANs), introduced in 2014, and the transformer architecture, introduced in 2017, have enabled realistic image synthesis and language generation, powering applications such as chatbots and creative AI.

Today, companies leverage AI in fields ranging from healthcare diagnostics to autonomous vehicles, marking a new era of integration between humans and intelligent systems.

Key Takeaways

- The Dartmouth Conference in 1956 formally established AI as a research field, focusing on symbolic reasoning.

- Early AI programs demonstrated potential but faced limitations that led to periods of reduced enthusiasm known as AI winters.

- Machine learning and neural networks introduced data-driven approaches, overcoming many challenges of symbolic AI.

- Deep learning breakthroughs in the 2010s led to significant practical applications, making AI a pervasive technology today.

- Understanding AI’s history helps contextualize current trends and future innovations in this rapidly evolving field.
